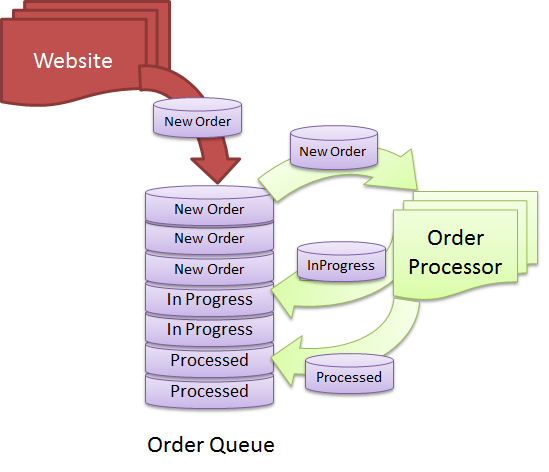

Queues are everywhere. Most websites use queues to send notifications such as email and SMS asynchronously. E-commerce sites use queues to store, process and dispatch orders. Factory assembly line automation systems use queues to run tasks in parallel, in a certain order. The queue is a widely used data structure that sometimes has to be built in a database instead of using a specialized queue technology like MSMQ. Running a high-performance, highly scalable queue on database technology is a big challenge, and it becomes hard to maintain once millions of rows are queued and dequeued per day. Let me show you some common mistakes made in designing queue-like tables and how to get maximum performance and scalability from a queue implemented using simple database features.

Let’s first identify the challenges you have in such queue tables:

- The table is both read from and written to. Thus queuing and dequeuing impact each other and cause lock contention, transaction deadlocks, IO timeouts etc. under heavy load.

- When multiple receivers try to read from the same queue, they can pick up the same items, which results in duplicate processing. You need to implement some kind of high-performance row lock on the queue so that the same item never gets picked up by concurrent receivers.

- The Queue table needs to store rows in a certain order and read them in a certain order, which is an index design challenge. It’s not always first in, first out. Sometimes orders have a higher priority and need to be processed regardless of when they were queued.

- The Queue table needs to store serialized objects in XML or binary form, which becomes a storage and index rebuild challenge. You can’t rebuild the indexes on the Queue table while it stays online, because it contains text and/or binary fields. Thus the table keeps getting slower every day, and eventually queries start timing out until you take downtime and rebuild the indexes.

- During dequeue, a batch of rows is selected, updated and then returned for processing. You have a “State” column that defines the state of the items, and during dequeue you select items in a certain state. State only has a small set of values, e.g. PENDING, PROCESSING, PROCESSED, ARCHIVED. As a result, an index on the “State” column alone does not help, because it does not give you enough selectivity: there can be thousands of rows with the same state. Any dequeue operation then results in a clustered index scan that is both CPU and IO intensive and produces lock contention.

- During dequeue, you cannot just remove the rows from the table because that causes fragmentation in the table. Moreover, you need to retry orders/jobs/notifications N times in case they fail on the first attempt. This means rows are stored for a longer period, indexes keep growing and dequeue gets slower day by day.

- You have to archive processed items from the Queue table to a different table or database in order to keep the main Queue table small. That means moving a large number of rows of some particular status to another database. Such large data removal leaves the table highly fragmented, causing poor queue/dequeue performance.

- You have a 24×7 business, with no maintenance window in which you can take downtime and archive a large number of rows. This means you have to archive rows continuously without affecting production queue-dequeue traffic.
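To make a few of these challenges concrete (duplicate pickup by concurrent receivers, priority ordering, and archiving without a maintenance window), here is a minimal sketch in Python. It uses SQLite purely as a stand-in database, so it does not reproduce SQL Server locking behavior, and it is not the design from the linked article. The table names `queue_item` and `queue_archive`, the index `ix_queue_dequeue`, and all function names are assumptions made up for illustration. The composite index on state, priority and id is one possible way to avoid scanning on the low-selectivity state column alone.

```python
import sqlite3


def open_queue(path=":memory:"):
    # isolation_level=None: transactions are managed explicitly with BEGIN/COMMIT.
    conn = sqlite3.connect(path, isolation_level=None)
    conn.execute("""
        CREATE TABLE IF NOT EXISTS queue_item (
            id       INTEGER PRIMARY KEY AUTOINCREMENT,
            priority INTEGER NOT NULL DEFAULT 0,
            state    TEXT    NOT NULL DEFAULT 'PENDING',
            payload  TEXT    NOT NULL
        )""")
    conn.execute("""
        CREATE TABLE IF NOT EXISTS queue_archive (
            id       INTEGER PRIMARY KEY,
            priority INTEGER NOT NULL,
            payload  TEXT    NOT NULL
        )""")
    # State alone is not selective, so the index also covers the dequeue order.
    conn.execute("""
        CREATE INDEX IF NOT EXISTS ix_queue_dequeue
        ON queue_item (state, priority DESC, id)""")
    return conn


def enqueue(conn, payload, priority=0):
    cur = conn.execute(
        "INSERT INTO queue_item (priority, payload) VALUES (?, ?)",
        (priority, payload))
    return cur.lastrowid


def dequeue_batch(conn, batch_size):
    # BEGIN IMMEDIATE takes the write lock up front, so two receivers
    # cannot select the same PENDING rows before either marks them.
    conn.execute("BEGIN IMMEDIATE")
    try:
        rows = conn.execute(
            "SELECT id, priority, payload FROM queue_item "
            "WHERE state = 'PENDING' ORDER BY priority DESC, id LIMIT ?",
            (batch_size,)).fetchall()
        conn.executemany(
            "UPDATE queue_item SET state = 'PROCESSING' WHERE id = ?",
            [(row[0],) for row in rows])
        conn.execute("COMMIT")
    except Exception:
        conn.execute("ROLLBACK")
        raise
    return rows


def complete(conn, ids):
    conn.executemany(
        "UPDATE queue_item SET state = 'PROCESSED' WHERE id = ?",
        [(i,) for i in ids])


def archive_batch(conn, batch_size):
    # Move a small batch of PROCESSED rows per call, so archiving can run
    # continuously in short transactions instead of one long one.
    conn.execute("BEGIN IMMEDIATE")
    try:
        ids = [(row[0],) for row in conn.execute(
            "SELECT id FROM queue_item WHERE state = 'PROCESSED' "
            "ORDER BY id LIMIT ?", (batch_size,)).fetchall()]
        conn.executemany(
            "INSERT INTO queue_archive (id, priority, payload) "
            "SELECT id, priority, payload FROM queue_item WHERE id = ?", ids)
        conn.executemany("DELETE FROM queue_item WHERE id = ?", ids)
        conn.execute("COMMIT")
    except Exception:
        conn.execute("ROLLBACK")
        raise
    return len(ids)
```

A receiver would call `dequeue_batch(conn, n)` in a loop, process the returned rows, and pass their ids to `complete`; a separate background job would call `archive_batch` with a small batch size at regular intervals. Retry counting and failure handling are left out of the sketch.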

If you have implemented such queue tables, you might have suffered from one or more of the above challenges. Let me give you some tips on how to overcome these challenges and how to design and maintain a high performance queue table.

Read the article for details:

http://www.codeproject.com/KB/database/fastqueue.aspx

Please vote if you find this useful.

It doesn’t seem you’re comparing apples to apples. In your final query you don’t create a temp table to do the update; you could’ve done the exact same thing in the other queries with just update…from.

I’d suggest a service bus like nservicebus over this approach

UPDATE … FROM (SELECT …) will only update the rows. It won’t return the rows as a result of the stored proc. The table variable (not temp table) is needed to return the selected rows along with the payload.

I agree with @Steve. NServiceBus is a much better solution for this.

Define “better”

try this pattern: http://martinfowler.com/articles/lmax.html