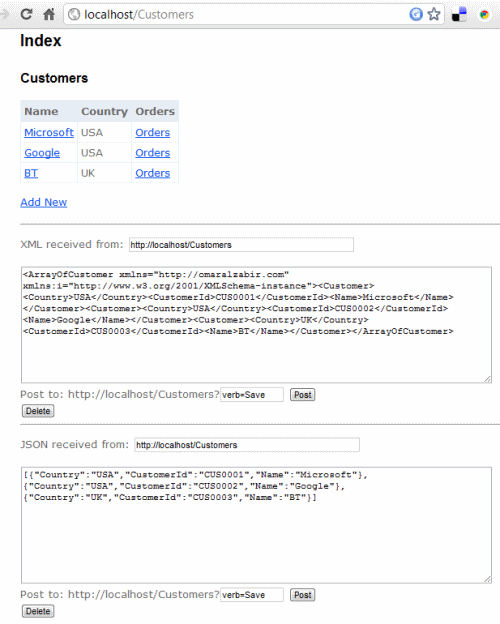

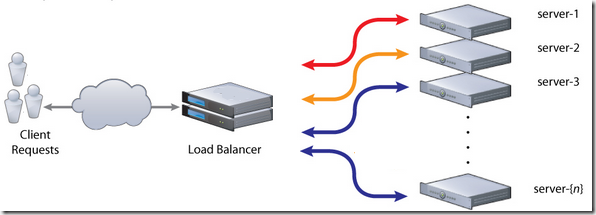

A truly RESTful API gives each entity and each collection its own unique URL, and puts no verb or action in that URL. You cannot have URLs like /Customers/Create, /Customers/John/Update or /Customers/John/Delete, where the action is part of the URL that represents the entity. A URL can only represent the state of an entity. For example, /Customers/John represents the state of John, a customer, and GET, POST, PUT and DELETE on that very URL perform the CRUD operations. The same goes for a collection: /Customers returns the list of customers, and a POST to that URL adds new customer(s).

Usually we create separate controllers to handle the API part of a website. I will show you how to build both a RESTful website and an API from the same controller code, working over the exact same URLs. A browser uses those URLs to browse the website, and a client application uses them to perform CRUD operations on the entities.
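To make the idea concrete outside ASP.NET MVC, here is a minimal, framework-free Python sketch (not the author's controller code) of one URL per collection and one per entity, where the HTTP method rather than the URL selects the operation. The in-memory `customers` store, its `city` field and the function names are hypothetical. In this sketch POST goes to the collection, PUT and DELETE go to the entity, and any other method gets 405.

```python
from http import HTTPStatus

# Hypothetical in-memory store standing in for the real data layer.
customers = {"John": {"name": "John", "city": "London"}}

def customers_collection(method, body=None):
    """/Customers: GET lists the collection, POST adds a customer."""
    if method == "GET":
        return HTTPStatus.OK, list(customers.values())
    if method == "POST":
        customers[body["name"]] = body
        return HTTPStatus.CREATED, body
    return HTTPStatus.METHOD_NOT_ALLOWED, None

def customer_entity(method, name, body=None):
    """/Customers/<name>: GET reads, PUT updates, DELETE removes."""
    if name not in customers:
        return HTTPStatus.NOT_FOUND, None  # this sketch does not create via PUT
    if method == "GET":
        return HTTPStatus.OK, customers[name]
    if method == "PUT":
        customers[name] = {**body, "name": name}  # the URL, not the body, names the entity
        return HTTPStatus.OK, customers[name]
    if method == "DELETE":
        return HTTPStatus.OK, customers.pop(name)
    return HTTPStatus.METHOD_NOT_ALLOWED, None

# The same URL, /Customers/John, is used for every operation on John.
print(customer_entity("GET", "John"))
print(customer_entity("PUT", "John", {"city": "Paris"}))
print(customer_entity("DELETE", "John"))
```

There is no `create`, `update` or `delete` anywhere in a URL: the path string stays identical across operations, and only the method changes.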

I have tried Scott Gu’s examples on creating RESTful routes, this MSDN Magazine article, Phil Haack’s REST SDK for ASP.NET MVC, and various other examples. But they all make the same classic mistake: the action is part of the URL. You end up with URLs like http://localhost:8082/MovieApp/Home/Edit/5?format=Xml to edit an entity and to choose the format you need (e.g. XML). These aren’t truly RESTful, because the URL does not uniquely represent the state of an entity; it carries the action as well. With the action in the URL, the job is straightforward in ASP.NET MVC. It only becomes tricky once you take the action out of the URL and have to support CRUD over the same URL in three formats (HTML, XML and JSON). At that point you need some custom filters. It’s not very tricky, though. You just need to keep in mind that your controller actions serve multiple formats, and design your website in a way that makes it API friendly: make the website URLs look like API URLs.

The example code includes a library of ActionFilterAttribute and ValueProvider implementations that make it possible to serve and accept HTML, JSON and XML over the same URL. A regular browser gets HTML output, an AJAX call expecting JSON gets a JSON response, and an XmlHttp call expecting XML gets an XML response.
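The filters themselves are C# ActionFilterAttribute subclasses and are not reproduced here. As a language-neutral illustration of the same idea, the Python sketch below picks an output format from the request's `Accept` header and renders one model as HTML, JSON or XML. Choosing by `Accept` q-values, and the `SUPPORTED`, `negotiate` and `render` names, are assumptions for illustration, not a description of how the author's library decides. A typical browser `Accept` header also lists `application/xml` at a lower q-value, which is why a plain substring check would wrongly serve XML to browsers.

```python
import json
from html import escape
from xml.etree.ElementTree import Element, SubElement, tostring

# Media types this sketch can produce, mapped to an internal format name.
SUPPORTED = {
    "text/html": "html",
    "application/json": "json",
    "application/xml": "xml",
    "text/xml": "xml",
}

def negotiate(accept):
    """Pick the supported format with the highest q-value; default to HTML."""
    best, best_q = "html", 0.0
    for part in (accept or "").split(","):
        media, *params = [p.strip() for p in part.split(";")]
        q = 1.0
        for param in params:
            if param.startswith("q="):
                try:
                    q = float(param[2:])
                except ValueError:
                    q = 0.0
        fmt = SUPPORTED.get(media.lower())
        if fmt and q > best_q:
            best, best_q = fmt, q
    return best

def render(model, fmt, root="Customer"):
    """Serialize a flat dict model as JSON, XML or a simple HTML table."""
    if fmt == "json":
        return json.dumps(model)
    if fmt == "xml":
        element = Element(root)
        for key, value in model.items():
            SubElement(element, key).text = str(value)
        return tostring(element, encoding="unicode")
    rows = "".join(
        f"<tr><th>{escape(k)}</th><td>{escape(str(v))}</td></tr>"
        for k, v in model.items()
    )
    return f"<table>{rows}</table>"

model = {"Id": "C0001", "Name": "John"}
browser = "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8"
print(negotiate(browser))  # html
print(render(model, negotiate("application/json")))  # {"Id": "C0001", "Name": "John"}
```

The controller logic that loads `model` is written once; only the final serialization step depends on the negotiated format.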

You might ask: why not use the WCF REST SDK? The idea is to reuse the same logic to retrieve models and emit HTML, JSON and XML from the same code, so that we do not have to duplicate logic in the website and again in the API. With the WCF REST SDK, you would have to create a WCF API layer that replicates the model-handling logic already in the controllers.

The example shown here offers the following RESTful URLs:

- /Customers: returns a list of customers. A POST to this URL adds a new customer.

- /Customers/C0001: returns details of the customer with id C0001. Update and delete are supported on the same URL.

- /Customers/C0001/Orders: returns the orders of the specified customer. A POST to this URL adds a new order for the customer.

- /Customers/C0001/Orders/O0001: returns a specific order and allows update and delete on the same URL.

All these URLs support GET, POST, PUT and DELETE. Users can browse to these URLs and get rendered HTML pages, and client apps can make AJAX calls to the same URLs to perform CRUD operations. The result is a truly RESTful API and website.
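One way to check that these four URLs identify resources rather than actions is to write the route table down. The Python sketch below is a hypothetical resolver; the `ROUTES` table, the resource names and the `customer_id`/`order_id` parameter names are illustrative and are not ASP.NET MVC's routing API. Each pattern names a resource, the query string is ignored when identifying it, and an action-style URL such as /Customers/John/Update simply matches nothing.

```python
import re

# Hypothetical route table: every pattern names a resource, none names an action.
ROUTES = [
    (re.compile(r"^/Customers/?$"), "customers"),
    (re.compile(r"^/Customers/(?P<customer_id>[^/]+)/?$"), "customer"),
    (re.compile(r"^/Customers/(?P<customer_id>[^/]+)/Orders/?$"), "orders"),
    (re.compile(r"^/Customers/(?P<customer_id>[^/]+)/Orders/(?P<order_id>[^/]+)/?$"), "order"),
]

def resolve(path):
    """Map a path to (resource, ids); return None if no resource matches."""
    path = path.split("?", 1)[0]  # the query string adds context, not identity
    for pattern, resource in ROUTES:
        match = pattern.match(path)
        if match:
            return resource, match.groupdict()
    return None

print(resolve("/Customers/C0001/Orders/O0001"))
# ('order', {'customer_id': 'C0001', 'order_id': 'O0001'})
print(resolve("/Customers/John/Update"))
# None
```

The HTTP method, not the path, then decides which operation runs on the resolved resource.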

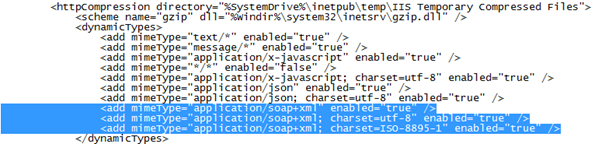

They also support tunneling verbs over POST, in case PUT and DELETE are not allowed on your web server or through firewalls. These verbs are often disabled by default on web servers and firewalls as a common security practice. In that case you can use POST and pass the verb in the query string. For example, a POST to /Customers/C0001?verb=Delete deletes the customer. This does not break RESTfulness, since the URL /Customers/C0001 still uniquely identifies the entity; you are only passing additional context on the URL. Query strings are also used for filtering and sorting on REST URLs. For example, /Customers?filter=John&sort=Location&limit=100 tells the server to return a filtered, sorted and paged collection of customers.
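Both uses of the query string can be sketched in a few lines of Python. The `verb` parameter and the `filter`, `sort` and `limit` names come from the examples above. Honouring `verb` only on POST, matching `filter` as a case-insensitive substring of any field, sorting by string value, and the `effective_method`/`query_collection` helpers are assumptions made for this illustration, not the author's implementation.

```python
from urllib.parse import parse_qs, urlsplit

# Verbs that may be tunnelled through POST via ?verb=... (an assumption).
OVERRIDABLE = {"PUT", "DELETE"}

def effective_method(method, url):
    """Return the HTTP method to dispatch on, honouring ?verb= only for POST."""
    method = method.upper()
    if method != "POST":
        return method  # GET stays safe even if a ?verb= is present
    verb = parse_qs(urlsplit(url).query).get("verb", [""])[0].upper()
    return verb if verb in OVERRIDABLE else "POST"

def query_collection(items, url):
    """Apply hypothetical filter/sort/limit query parameters to a collection."""
    qs = parse_qs(urlsplit(url).query)
    text = qs.get("filter", [""])[0].lower()
    if text:
        # Keep items where any field contains the filter text (case-insensitive).
        items = [i for i in items if any(text in str(v).lower() for v in i.values())]
    sort_key = qs.get("sort", [""])[0]
    if sort_key:
        items = sorted(items, key=lambda i: str(i.get(sort_key, "")))
    limit = qs.get("limit", [""])[0]
    if limit.isdigit():
        items = items[: int(limit)]
    return list(items)

rows = [
    {"Name": "John Smith", "Location": "Paris"},
    {"Name": "Mary", "Location": "Oslo"},
    {"Name": "Johnny", "Location": "Berlin"},
]
print(effective_method("POST", "/Customers/C0001?verb=Delete"))  # DELETE
print([r["Name"] for r in query_collection(rows, "/Customers?filter=John&sort=Location&limit=100")])
# ['Johnny', 'John Smith']
```

Restricting the override to POST keeps GET safe: a crawler that follows a link such as /Customers/C0001?verb=Delete cannot delete anything.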

Read my CodeProject article for details:

http://www.codeproject.com/KB/aspnet/aspnet_mvc_restapi.aspx

The source code is available here:

http://code.msdn.microsoft.com/Build-truly-RESTful-API-194a6253

Enjoy!